Rocket Pro Navigate: Conducting Rapid Research to Uncover AI Use Cases for Mortgage Brokers

Rocket Mortgage | UX Research Intern

May 2025 - August 2025 • 8-week timeline • UX Researcher

Problem

Rocket Pro Navigate was a rapidly developed LLM pilot designed for mortgage broker workflows, but the team was operating on assumptions rather than validated user behavior. Without understanding how brokers engage with AI tools, any iteration risked building on a shaky foundation.

Goal

We needed to understand if mortgage brokers would organically integrate Pro Navigate into their workflows, what value they were finding, and where the experience was falling short, all within a compressed timeline that demanded lean, continuously shared insights.

My Role

I conducted a survey to gather participant demographics for our pilot group, then held interviews to follow up on their experiences after a week-long trial period. These interviews informed early design iterations of Rocket Pro Navigate that were included in its release on October 1st, 2025.

Tools:

Figma

dScout

Azure DevOps

Methods:

User Interviews

Surveys

Diary Study

Lean Agile

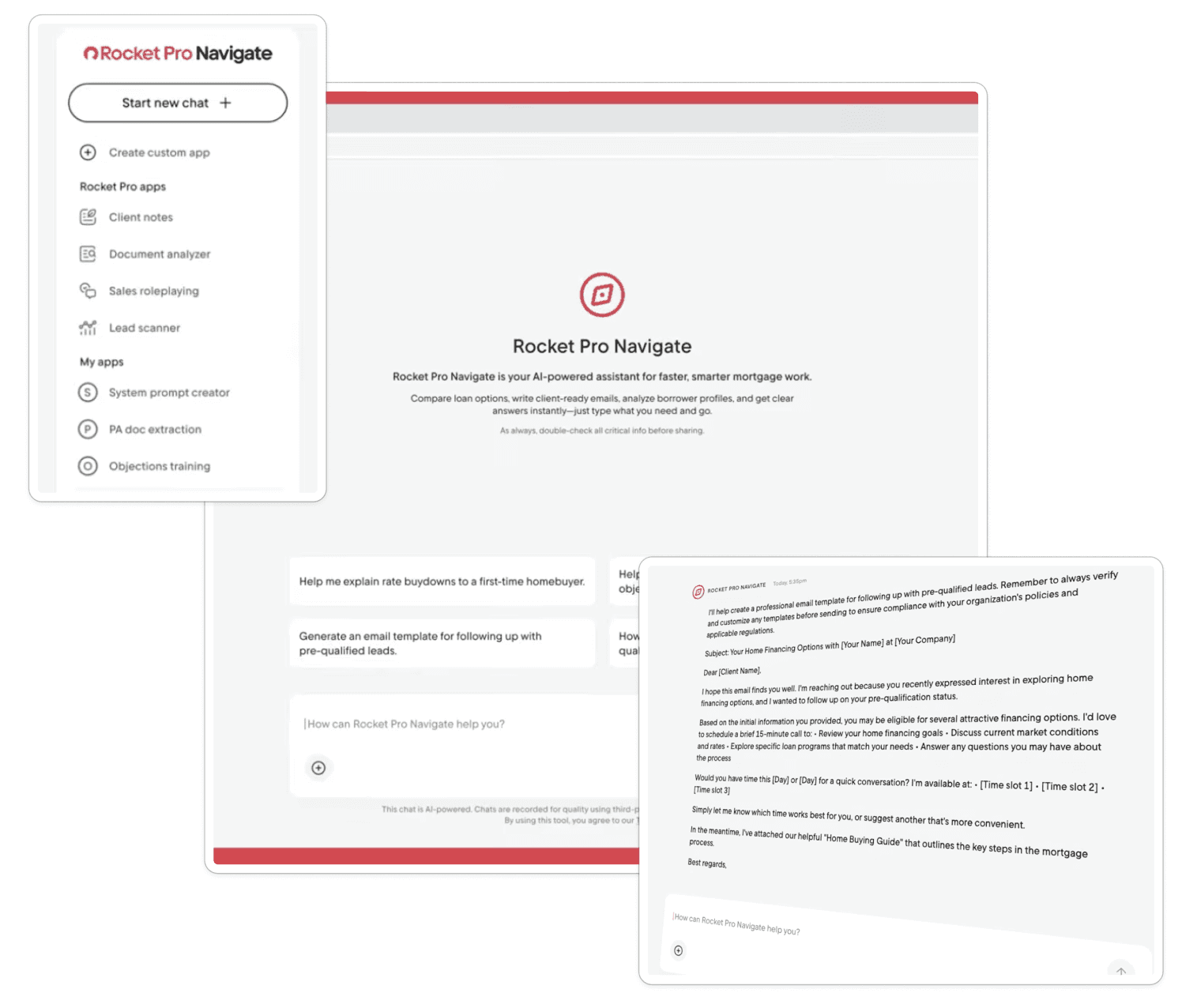

Meet Rocket Pro Navigate

Project Impact

Reframed the product strategy for Rocket Pro's suite of AI tools, directly prompting a team pivot in how conversational AI products are positioned and differentiated for brokers.

Contributed research to a unique LLM purpose-built for mortgage broker workflows. Pro Navigate is one of the first AI tools of its kind in the mortgage industry.

Adaptive research design protected the timeline by pivoting methods in response to legal and security constraints, ensuring usable insights were captured while minimizing release delays.

Two app recommendations shipped in the final product: features I identified through user interviews made it into Pro Navigate's official October 2025 release.

If you're interested in a deeper dive into this project, keep scrolling!


Research Deep Dive

Background

Rocket Pro Navigate was a fast, scrappy product pilot developed over 12 weeks. The idea of building an LLM to assist in mortgage broker workflows surfaced in week 3, I joined the project in week 4, and by week 8 we had a working experience ready for user testing.

The idea for Pro Navigate was introduced during a team meeting by the product manager as a potential pilot.

I joined the project after a coffee chat and began helping shape the research direction, eventually leading research.

We launched an MVP experience to validate broker interest, and I conducted follow-up interviews to gather insights.

The core question was: if mortgage brokers were given a Rocket-branded LLM to enhance their workflows, would they use it, and how? As the product rapidly evolved, research, design, and development shifted in parallel, making this an exercise in lean product management under real constraints. When the lead researcher went on PTO mid-project, I stepped in as the research point of contact, taking ownership of study planning, pivots, and stakeholder alignment to keep the pilot moving forward.

User Research Process and Plan

Research for Pro Navigate did not follow a textbook UX flow. We began with an evaluative mindset, largely because the product was mostly already built; it just needed tweaks to become external-facing and targeted towards mortgage brokers. We adapted our methods mid-project due to legal and security constraints, and shared insights continuously across teams to support rapid decision-making.

Evaluative First

Started with an existing product that needed tweaks before testing, minimizing early research, design, and development timelines

Mid-Project Pivot

Original diary study rapidly shifted to post-use interviews with a smaller testing sample due to legal and infosec limitations

Always-on Research

Research insights were shared live and in regular cross-functional meetings to guide product decisions efficiently

Conducting research for this project was a delight because it gave me experience with a more rapid, iterative approach to product work at a major company. My work with Rocket Pro Assist followed a much more textbook flow, which was exciting and informative in its own way, but Rocket Pro Navigate let me get my hands dirty conducting research that had to be both rigorous and snappy. It was fantastic exposure to the Lean Agile approach in a corporate setting.

Research Questions

Because this project was such a collaborative effort, it took multiple meetings to define the roadmap and kick off the project. As discussions continued, we kept running into the same questions, which eventually became our research questions:

1. What would mortgage brokers do with an LLM specifically designed to assist them?

2. Would mortgage brokers use a Rocket-branded LLM trained to assist them in their daily workflows?

3. After using Pro Navigate, what do mortgage brokers wish it could do for them?

Our research questions were longitudinal, following a sequential timeline just as our study would. The first question was "the before," asking what users would do with an LLM designed for them. The second was "the during," digging into whether they would actually use it. The third was "the after," where they reflect on their experience and what they wished it could do.

Soon, our meeting discussions moved from what Pro Navigate would be, to everything Pro Navigate needed to do, to deciding on a baseline functionality. This allowed us as researchers to jump on the opportunity to get real user insights as soon as possible with an MVP and then begin making informed decisions for iterations, rather than blindly building on a product that still needed validation.

Evolving the Research Approach

Legal and infosec constraints required us to pivot away from our initial research plan: a diary study with ideally 30 participants, recruited by sales and from our broker panel. We could have no more than 7 participants to prevent issues with intellectual property or data breaches. These users would also be recruited as a micro-community, drawn from firms with which the product manager had previously conducted rapid research.


We simplified the approach to follow-up interviews after participants were granted access to Pro Navigate for a week and encouraged to integrate it into their workflows. Since this project was operating on a tight timeline, we could not afford the participant drop-off often associated with non-incentivized diary studies, nor the added legal and security exposure. This approach shortened the timeline and increased the likelihood of collecting usable data, even if it meant sacrificing some depth.

AI Experience Survey Sample Questions

As research participants were onboarded, we asked them to complete a survey to establish their background with AI. The survey was not anonymous, allowing us to connect responses directly to interview data. This helped us understand how varying levels of AI experience and sentiment might influence adoption of a new AI tool.

For about how long have you been using AI tools?

What AI tools have you used before?

Have you paid for a subscription to any of these tools?

On a scale from 1 - 5, where 1 = very negative and 5 = very positive, how would you rate your overall experience using AI tools?

After participants had a week of access to Pro Navigate, we met with them to gather their impressions of the tool. We intentionally provided no guidance, allowing us to observe whether and how they chose to engage with it on their own. This helped us identify gaps in the experience, assess performance, and understand whether the tool fit into their existing workflows at all.

What was your workload like this week? Was it typical, busier than usual, or slower?

How would you describe your overall experience using Pro Navigate?

How did you learn to use Pro Navigate during the week?

What specific tasks or questions did you use Pro Navigate for this week?

User Research Results

Study Participation Totals:

Survey Results

Interview Findings

From here on, we focused on just those who used Pro Navigate for the week. The following major insights came from the 4 users we were able to schedule interviews with. Synthesis was completed by affinity mapping the interview data in Lucidchart.

3 out of 4

Felt this tool would be best suited for newer mortgage brokers

This was largely because it could help answer questions about guidelines, craft emails, and assist with cold calling, not so much with specific time-intensive tasks.

4 out of 4

Recommended more specific use cases for the average mortgage broker

Identifying paths forward for Pro Navigate was a major goal of this research. By gathering commonalities in the suggestions, we could iterate for the next round of testing.

2 out of 4

Reported response inaccuracies; another reported privacy concerns

This showed us that we needed to refine the knowledge base. We also realized we needed clearer data storage information and settings that companies could control.

Reflections

For general tasks, participants defaulted to LLMs they were already familiar with and had already loaded data into.

Participants raised data privacy concerns because the tool asked for simple consent without documentation outlining how their information would be shared. This led multiple users to avoid the tool for more specific business cases.

Recommendations

Recommendation 1: Increase Upfront Utility Through Custom Apps

Research revealed that users had varying levels of trust and patience with AI. LLM-based tools require effort. Users must prompt, review, and refine, and if unsure of a tool’s capabilities or reliability, they may abandon it.

While suggested prompts and a small set of apps helped orient users, findings highlighted the value of custom apps designed for clear, targeted tasks that deliver immediate utility. Examples surfaced by users included lead scanning and cross-client note tracking.

Recommendation 2: Define Automation Goals as We Expand AI-Powered Experiences

One of our next steps should be defining what automation should accomplish for Rocket Pro users. By anchoring AI efforts to brokers’ real pain points, teams can explore building differentiated, task-focused AI experiences.

These capabilities could be surfaced through tools like Pro Navigate or Assist, using chat as an entry point rather than the end experience. Since LLMs are already the most familiar AI tool for many brokers, chat is a strong starting point. However, by leveraging the extensive data we already have on broker workflows and pain points, we can move beyond what users know to ask for and deliver solutions that meaningfully reduce effort without battling users' preconceived notions of an application. There is a lot of potential for a chat interface to better connect Rocket's products and knowledge bases, but it's how we build on top of it that will make a major difference.

Recommendation 3: Evaluate Unifying Rocket Pro’s Chat Experiences

While Rocket Pro Navigate and Rocket Pro Assist serve different purposes, user feedback on what to add to Navigate closely mirrored what we had previously heard for Assist. Because the two experiences are nearly identical in both interface and content, we identified a risk that users would struggle to understand when to use each tool.

This was largely because we, as a team, struggled to consistently define the difference. With the user experiences being so similar, we worried users would carry negative perceptions from one product into the other. This raised the question of whether unifying chat experiences across the Rocket Pro ecosystem could reduce confusion.

Outcomes

Rocket Pro Navigate was officially released on October 1st, 2025

I was ecstatic to see that the 2 applications I recommended adding to the product were included in the iterations made after the end of my internship.

Further, I had several discussions with Rocket Pro product managers about the conversational AI experience for brokers as a whole. While my recommendations largely encompassed the topics of these discussions, I am proud of how they factored into the product outcomes. In particular, we discussed:

If brokers have a bad experience with Rocket Pro Navigate or Rocket Pro Assist, are we confident that they won't carry that experience or opinion from one product to the other?

Do we believe that the difference between the products would be clearer for them than it is for us?

Knowing about brokers' AI usage and AI perceptions, what can we do to clearly differentiate our conversational AI products from those they find on other websites or LLMs like ChatGPT?

This prompted a shift in strategy that has not been made public and that I cannot share due to an NDA, but it helped re-contextualize how we saw these products as part of the broker user experience.

Reflection

What I Owned:

This was the project I learned the most from. Working with fast-moving, constantly shifting requirements showed me what research actually looks like outside of a classroom, and I rose to it. I became the point of contact, the person in product meetings whose input was sought when findings could resolve a disagreement. By the end of my internship, the product manager told me he had forgotten I was even an intern. That meant everything.

What I’d Change:

The biggest structural lesson was the value of upfront discovery research, not just for individual tools, but for an entire strategy. Because I was simultaneously researching another AI product in the same broker space, I kept running into the same foundational gaps: we didn't have a baseline understanding of how brokers think about AI. What do they use it for? What have they tried and abandoned? Without that foundation, we were designing from assumptions rather than insight. Proper benchmarking research at the start could have changed our entire approach.

In terms of methods, I would have pushed back on the diary study earlier. Without incentives and with 30 proposed participants under a tight deadline, the risk of uncontrolled drop-off was too high. I'd have proposed a longitudinal interview series instead. This would include two structured interviews per participant, bookending a two-week usage period to preserve qualitative richness while asking far less of participants. Even after our participant count dropped significantly, I still believe this structure would have served us better. The post-use interviews I conducted after a week of tool access were a step in that direction, but ghosting remained a persistent issue. In hindsight, I would have investigated incentivizing the interviews given our reduced sample size, and made completing the background survey a prerequisite for participation to keep interviews more focused on the tool experience rather than covering foundational context mid-session.

What I’ll Carry Forward:

What I'll carry forward most is the confidence to advocate for the right research approach even under pressure, and the understanding that our job isn't just to ask users what they want, but to understand them well enough to know what they actually need.

I will also carry with me an understanding of how research really works: it's messy at times, and things change fast. By staying tuned in and being proactive, research has an opportunity to be the calm in the storm that keeps things moving along, even if it's slower or smaller in scale than initially desired. One of the most important aspects of this is having the right team culture. I was incredibly grateful for the group of people I got to work with on this project. Even when there were twists and turns, there was a strong expectation of supporting one another and jumping in when it counted.